The Geometry of Intelligence: What Lagrange Can Teach Modern AI

- saurabhsarkar

- 12 hours ago

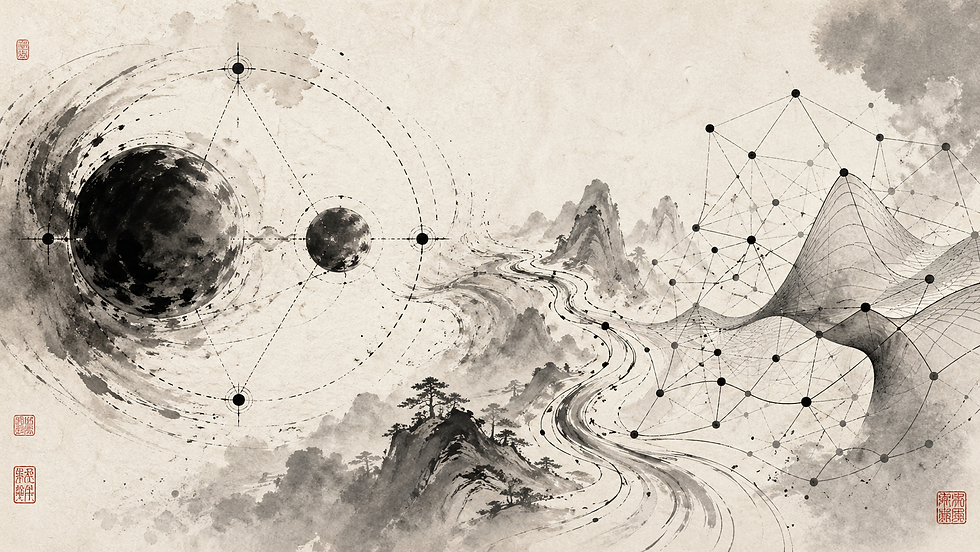

In 1772, Joseph-Louis Lagrange solved a special case of the three-body problem. He didn't do it by tracking every force over time. He did it by finding the five points in the combined gravitational field of two large orbiting bodies where a third, much smaller body can hold its position relative to them, kept in place not by any single pull but by the balance of all of them. Two of the five are genuinely stable; the other three are equilibria that a small nudge will upset.

These are the Lagrange points. They are where constraints carve stable regions out of a space that would otherwise be chaotic.

That is a useful image to keep in mind, because modern AI has its own version of the three-body problem. Models now operate in spaces with billions of parameters, learning from data that is sparse, noisy, and incomplete, while being asked to obey physical laws, fairness rules, safety limits, and business logic - often all at once.

For most of the last decade, machine learning has treated this as a prediction problem. Give the model data, measure the error, adjust the weights, repeat. That playbook produced deep learning, large language models, and most of the AI economy. But it also produced a quieter problem: a model that only learns from data becomes good at interpolation without understanding the structure underneath. It fits the dots without knowing the shape of the surface.

The fix, increasingly, comes from an idea Lagrange formalized more than two centuries ago.

From prediction to constrained learning

Traditional machine learning asks: what prediction minimizes error? Constrained learning asks a harder question: what prediction minimizes error while still obeying the rules of the system?

The difference sounds small. It is not.

A model predicting the motion of a pendulum should not just match past observations. It should respect conservation of energy. A model approving loans should not just maximize repayment prediction. It needs to satisfy fairness, explainability, and compliance constraints. A model controlling a robot should not just choose the locally optimal next action. It must stay inside physical and safety limits.

Lagrange's machinery handles this directly. A constrained objective looks like this:

$$L(x, \lambda) = f(x) + \lambda \cdot g(x)$$

Here $f(x)$ is what we want to optimize, typically the loss. $g(x)$ is the constraint: a law of physics, a fairness condition, a smoothness requirement, a business rule. And $\lambda$, the Lagrange multiplier, controls the trade-off between them.

The multiplier is the part that matters. It is not a mathematical ornament. It is a price.

A low $\lambda$ tells the model: focus on accuracy, treat the constraint as a suggestion. A high $\lambda$ tells it: accuracy matters, but not if you break the rules. As $\lambda$ rises, the model gives up some fit on the data to stay closer to the constraint. As it falls, it does the opposite. Sweeping $\lambda$ traces out the trade-off curve between accuracy and structure (strictly, the convex part of the Pareto frontier).
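To make the knob concrete, here is a minimal sketch on a toy problem. The choices `loss(x) = (x - 3)^2` and `penalty(x) = x^2` are assumptions made purely for illustration; they stand in for $f(x)$ and $g(x)$ and are not taken from any real model. The minimizer has a closed form, so no optimizer is needed.

```python
def loss(x):
    """Toy stand-in for f(x): squared distance from the data-optimal value 3."""
    return (x - 3.0) ** 2


def penalty(x):
    """Toy stand-in for g(x): grows as x moves away from the rule x = 0."""
    return x ** 2


def minimizer(lam):
    """Minimizer of loss(x) + lam * penalty(x).

    Setting the derivative 2*(x - 3) + 2*lam*x to zero gives x = 3 / (1 + lam).
    """
    return 3.0 / (1.0 + lam)


def sweep(lams):
    """Return (lam, x, loss, penalty) at the minimizer for each multiplier."""
    rows = []
    for lam in lams:
        x = minimizer(lam)
        rows.append((lam, x, loss(x), penalty(x)))
    return rows


# Each printed row is one point on the trade-off curve.
for lam, x, f_val, g_val in sweep([0.0, 0.5, 1.0, 2.0, 10.0]):
    print(f"lambda={lam:5.1f}  x={x:.3f}  loss={f_val:.3f}  penalty={g_val:.3f}")
```

As the multiplier grows, the loss column rises and the penalty column falls. That is the whole trade-off in miniature: nothing is free, and the multiplier sets the exchange rate.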

That single knob is doing a lot of work. It turns governance — how much do we care about this rule versus that outcome — into a number the optimizer can act on.

A worked example: predictive maintenance on the factory floor

This is easiest to see in a setting Phenx clients live in every day.

Suppose you are predicting bearing failure on a critical pump. You have three years of vibration data, temperature readings, and a small number of recorded failures — maybe a dozen, because the plant is good at maintenance and failures are rare. You want a model that flags impending failure early enough to schedule the repair, but not so often that maintenance crews start ignoring the alerts.

A pure data-driven model trained on this is going to be jagged. Twelve failures in a multi-dimensional sensor space is not enough to learn a smooth decision boundary. The model will memorize idiosyncrasies of those twelve events and produce sharp, overconfident predictions in their neighborhood, with very little to say everywhere else.

Now add structure. Bearings degrade according to known physics: vibration energy in certain frequency bands grows monotonically as the bearing wears, temperature rises with friction, and the relationship between RPM and expected vibration is governed by equations the reliability engineers already have on a whiteboard somewhere.

Encode those as constraints. Roughly:

$$L(x, \lambda_1, \lambda_2) = f(x) + \lambda_1 \cdot g_1(x) + \lambda_2 \cdot g_2(x)$$

The first term says: match the failures you've seen. The second says: predicted risk should grow over time the way physics says it grows, not bounce around. The third says: nearby sensor readings should produce nearby risk scores.

Now the multipliers do real work. If you set $\lambda_1$ and $\lambda_2$ to zero, you get the brittle twelve-failure model. If you set them very high, you get a model that essentially ignores the data and predicts the textbook degradation curve for every bearing. The interesting region is in between, and you can find it empirically: sweep $\lambda$, measure performance on a held-out set of failures, watch the curve.
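A rough sketch of what that looks like in code, under heavy simplifying assumptions: one bearing, a risk score at each of 50 time steps, and five made-up risk labels standing in for real failure data. The penalty definitions (squared decreases for monotonicity, squared step-to-step changes for smoothness) and every name below are illustrative choices, not the formulation any particular plant uses. It also skips the held-out evaluation and only shows how the multipliers reshape the curve.

```python
import numpy as np


def monotonic_violation(r):
    """Sum of squared decreases; zero when risk never drops over time."""
    drops = np.maximum(0.0, r[:-1] - r[1:])
    return float(np.sum(drops ** 2))


def roughness(r):
    """Sum of squared step-to-step changes in the risk curve."""
    return float(np.sum(np.diff(r) ** 2))


def fit_risk_curve(obs_idx, obs_risk, n_steps, lam1, lam2, n_iter=10000):
    """Gradient descent on data_fit + lam1 * monotonic + lam2 * roughness."""
    r = np.zeros(n_steps)
    # Step size 1/L, where L bounds the curvature of the combined objective.
    step = 1.0 / (2.0 + 8.0 * lam1 + 8.0 * lam2)
    for _ in range(n_iter):
        grad = np.zeros(n_steps)
        # Data term: match the labeled time steps.
        grad[obs_idx] += 2.0 * (r[obs_idx] - obs_risk)
        # Monotonicity term: penalize any drop from step t to t+1.
        drops = np.maximum(0.0, r[:-1] - r[1:])
        grad[:-1] += 2.0 * lam1 * drops
        grad[1:] -= 2.0 * lam1 * drops
        # Smoothness term: penalize large changes between neighbors.
        steps = np.diff(r)
        grad[1:] += 2.0 * lam2 * steps
        grad[:-1] -= 2.0 * lam2 * steps
        r -= step * grad
    return r


# Hypothetical labels: risk observed at five time steps, one of them noisy.
obs_idx = np.array([5, 15, 25, 35, 45])
obs_risk = np.array([0.1, 0.2, 0.5, 0.4, 0.9])

for lam1, lam2 in [(0.0, 0.0), (1.0, 0.1), (10.0, 1.0)]:
    r = fit_risk_curve(obs_idx, obs_risk, 50, lam1, lam2)
    print(f"lam1={lam1:5.1f} lam2={lam2:4.1f} "
          f"violation={monotonic_violation(r):.4f} roughness={roughness(r):.4f}")
```

With both multipliers at zero the curve hits the five labels and says nothing anywhere else. Turning them up fills in the gaps between labels, at the cost of no longer matching the noisy label exactly.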

What you typically see is that constrained models outperform unconstrained ones on the things that matter operationally — false alarm rate, lead time before failure, stability across pumps the model has never seen — even though they look slightly worse on the narrow metric of "fit the twelve failures perfectly."

This is the practical payoff. In low-data environments, intelligence comes less from adding more data and more from adding better structure. The constraint does not create new failure examples. It does something almost as useful: it limits the number of worlds the model is allowed to believe in.

Physics-informed neural networks generalize this

Physics-informed neural networks (PINNs) take this pattern and apply it across an entire class of problems. A normal neural network learns from examples. A PINN learns from examples plus equations — usually partial differential equations describing the system.

The training loss has the same Lagrangian shape. One term penalizes mismatch with observed data. Another term penalizes violation of the governing PDE at sampled points throughout the domain. A multiplier balances them.
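Here is a stripped-down sketch of that loss shape. To keep it short and dependency-light, it swaps the neural network for a degree-5 polynomial and the PDE for a simple decay equation, $u'(t) = -k\,u(t)$, so it is not a real PINN. The structure of the loss is the same, though: a data term at a few measured points plus a weighted equation-residual term at sampled collocation points. All names and numbers are assumptions for illustration.

```python
import numpy as np


def design_matrices(t, degree):
    """Monomial basis t**j and its time derivative j * t**(j-1)."""
    powers = np.arange(degree + 1)
    phi = t[:, None] ** powers
    dphi = np.zeros_like(phi)
    dphi[:, 1:] = powers[1:] * t[:, None] ** (powers[1:] - 1)
    return phi, dphi


def fit(t_data, u_data, t_colloc, k, lam, degree=5):
    """Minimize sum(data error^2) + lam * sum(equation residual^2).

    Both terms are linear in the polynomial coefficients, so the combined
    objective is one stacked least-squares problem.
    """
    phi_d, _ = design_matrices(t_data, degree)
    phi_c, dphi_c = design_matrices(t_colloc, degree)
    residual_rows = dphi_c + k * phi_c  # u'(t) + k*u(t) at each collocation point
    A = np.vstack([phi_d, np.sqrt(lam) * residual_rows])
    b = np.concatenate([u_data, np.zeros(len(t_colloc))])
    coeffs, *_ = np.linalg.lstsq(A, b, rcond=None)
    return coeffs


def predict(coeffs, t):
    """Evaluate the fitted polynomial at time(s) t."""
    t = np.atleast_1d(np.asarray(t, dtype=float))
    phi, _ = design_matrices(t, len(coeffs) - 1)
    return phi @ coeffs


# Three measurements early in the domain; nothing measured after t = 0.5.
k = 1.0
t_data = np.array([0.0, 0.25, 0.5])
u_data = np.exp(-k * t_data)
t_colloc = np.linspace(0.0, 2.0, 40)

for lam in [0.0, 100.0]:
    coeffs = fit(t_data, u_data, t_colloc, k, lam)
    err = abs(predict(coeffs, 2.0)[0] - np.exp(-2.0 * k))
    print(f"lambda={lam:6.1f}  error at t=2: {err:.4f}")
```

The comparison to watch is the error at $t = 2$, far from any measurement. With the multiplier at zero the polynomial is free to do anything out there; with the equation term switched on, the residual penalty pins it down.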

For fluid flow, heat transfer, structural vibration, electromagnetic propagation, and other settings where the underlying physics is well understood but data is expensive to collect, PINNs can outperform purely data-driven models, especially in regions where you have no measurements at all. The equations fill in what the data cannot.

This is why AI in engineering, energy, manufacturing, and drug discovery will look different from consumer AI. The winning systems will not simply be larger models. They will be models that combine data, equations, and constraints, with multipliers tuned to the operational reality of the deployment.

The multiplier is governance

Step back from the math.

Every serious AI system has trade-offs. Accuracy versus fairness. Speed versus safety. Flexibility versus consistency. Profit versus risk. Automation versus human control. In most enterprise deployments, those trade-offs get litigated in meetings, encoded in policy documents, and then partially lost in translation when the model is built.

The Lagrange multiplier gives those trade-offs a formal place to live. A fraud model that trades a little recall for fewer false accusations has a $\lambda$ on its precision constraint. A credit model that trades raw accuracy for stability across customer groups has a $\lambda$ on its fairness term. A predictive maintenance model that trades sensor-noise fit for physical plausibility has a $\lambda$ on its physics term.

When the trade-off is explicit, it becomes auditable. You can answer questions like: how much accuracy did we give up to satisfy the fairness constraint? What happens if regulators tighten the constraint next year? Where on the frontier are we operating, and is that the right place?
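The first of those questions can be answered with a small table. The sketch below is one hedged way to produce it, on synthetic data generated inside the code: a linear scoring model with a penalty on the gap in mean score between two groups. That gap is only a crude proxy for fairness, and the data, feature names, and penalty are all hypothetical. The point is the audit pattern: refit at several multipliers and record what each one cost.

```python
import numpy as np


def make_synthetic_applicants(n=200, seed=0):
    """Synthetic data in which one feature is shifted between two groups."""
    rng = np.random.default_rng(seed)
    group = np.arange(n) % 2
    income = rng.normal(loc=1.0 + 0.8 * group, scale=1.0, size=n)
    history = rng.normal(size=n)
    X = np.column_stack([np.ones(n), income, history])
    y = 0.5 * income + 0.3 * history + rng.normal(scale=0.2, size=n)
    return X, y, group


def fit_with_gap_penalty(X, y, group, lam):
    """Minimize sum((Xw - y)^2) + lam * (mean-score gap between groups)^2."""
    d = X[group == 1].mean(axis=0) - X[group == 0].mean(axis=0)
    # The gap d @ w is linear in w, so the penalty is one extra weighted row.
    A = np.vstack([X, np.sqrt(lam) * d])
    b = np.append(y, 0.0)
    w, *_ = np.linalg.lstsq(A, b, rcond=None)
    mse = float(np.mean((X @ w - y) ** 2))
    gap = float(abs(d @ w))
    return mse, gap


def audit(X, y, group, lams):
    """Return (lam, extra error versus the unconstrained fit, remaining gap)."""
    base_mse, _ = fit_with_gap_penalty(X, y, group, 0.0)
    rows = []
    for lam in lams:
        mse, gap = fit_with_gap_penalty(X, y, group, lam)
        rows.append((lam, mse - base_mse, gap))
    return rows


X, y, group = make_synthetic_applicants()
for lam, cost, gap in audit(X, y, group, [0.0, 1.0, 10.0, 100.0, 1000.0]):
    print(f"lambda={lam:7.1f}  accuracy cost={cost:.4f}  group gap={gap:.4f}")
```

Each row pairs a multiplier with the accuracy given up and the gap that remains, which is the kind of record a reviewer or regulator can actually inspect.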

This is not a small thing. As AI moves from chatbots into operational systems — pricing loans, diagnosing equipment, managing grids, making medical recommendations — the cost of an unconstrained mistake stops being annoying and starts being expensive. Constraints, with prices attached, are how you keep those systems inside the regions where they're allowed to operate.

The lesson

The first era of modern AI rewarded scale: more data, more parameters, more compute. That playbook is not going away, but it is no longer enough on its own. The harder questions now are about behavior, not capacity. Can the model stay inside its operating region when the data shifts? Can it avoid recommendations that violate physics or policy? Can it explain why a constraint mattered for a given decision?

These are the questions Lagrange's framework was built for. Not because modern AI is secretly celestial mechanics, but because both problems share a structure: a system with too many degrees of freedom, governed by rules that constrain which configurations are actually allowed, where the interesting solutions sit at the balance points between competing forces.

Lagrange found stable points in a gravitational field by taking the constraints seriously. The next leap in applied AI will come from doing the same thing — building models that don't just fit the data, but understand what they are not allowed to break.
